The single workspace for everyone who touches your warehouse.

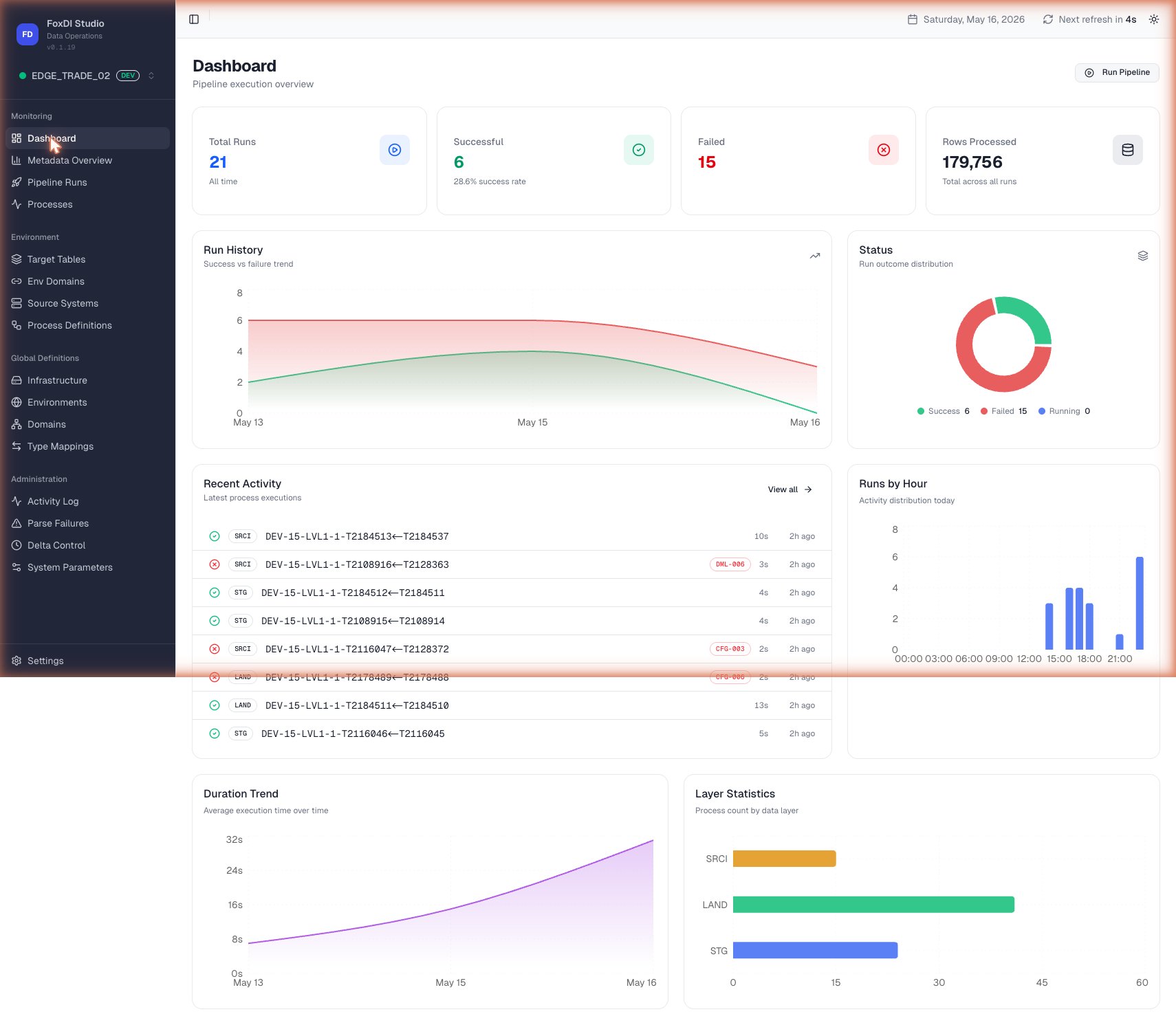

A modern web interface where data engineers define the work, data leaders monitor it in real time, and everyone shares the same view of what's running, what's passing, and what needs attention.

Live monitoring

One dashboard for KPIs, table health, and the runs currently in flight.

Preview before you run

See exactly what FluxDI will do, step by step and table by table, before anything executes.

Visual table editor

Define columns, keys, and meaning in a spreadsheet-style grid. The platform handles the rest.

Live progress & alerts

Watch each table load in real time. See errors the moment they happen.

Safer environments

Production, UAT, and Dev are color-coded, so it is always clear which environment you are about to run in.

Bring your existing catalog

Upload a spreadsheet of your existing tables and FluxDI imports them in seconds.